The story of steel begins long before bridges, I-beams, and skyscrapers. It begins in the stars.

Billions of years before humans walked the Earth—before the Earth even existed—blazing stars fused atoms into iron and carbon. Over countless cosmic explosions and rebirths, these materials found their way into asteroids and other planetary bodies, which slammed into one another as the cosmic pot stirred. Eventually, some of that rock and metal formed the Earth, where it would shape the destiny of one particular species of walking ape.

On a day lost to history, some fortuitous humans found a glistening meteorite, mostly iron and nickel, that had barreled through the atmosphere and crashed into the ground. Thus began an obsession that gripped the species. Over the millennia, our ancestors would work the material, discovering better ways to draw iron from the Earth itself and eventually to smelt it into steel. We’d fight over it, create and destroy nations with it, grow global economies by it, and use it to build some of the greatest inventions and structures the world has ever known.

Metal From Heaven

King Tut had a dagger made of iron—a treasured object in the ancient world worthy of few more than a pharaoh. When British archaeologist Howard Carter found Tutankhamun’s tomb nearly a century ago and laid eyes on this object, it was clear the dagger was special. What archaeologists didn’t know at the time was that the blade came from space.

Iron that comes from meteorites has a higher nickel content than iron dug up from the ground and smelted by humans. In the years since Carter’s big discovery, researchers have found that not only King Tut’s dagger but also virtually all iron goods dating to the Bronze Age were made from iron that fell from the sky.

To our ancestors, this exotic alloy must have seemed like it was sent by entities beyond our understanding. The ancient Egyptians called it biz-n-pt. In Sumer, it was known as an-bar. Both translate to “metal from heaven.” The iron-nickel alloy was supple and easily hammered into shape without breaking. But there was an extremely limited supply, brought to Earth only by the occasional extraterrestrial delivery, making this metal of the gods more valuable than gems or gold.

It took thousands of years before humans started looking beneath their feet. Around 2,500 BC, tribesmen in the Near East discovered another source of dark metallic material hidden underground. It looked just like the metal from heaven—and it was, but something was different. The iron was mixed with stones and minerals, lumped together as ore. Extracting iron ore wasn’t like picking up a stray piece of gold or silver. To remove iron from the subterranean realms was to tempt the spirit world, so the first miners conducted rituals to placate the higher powers before digging out the ore, according to the 1956 book The Forge and the Crucible.

But pulling iron ore from the Earth was only half the battle. It took the ancient world another 700 years to figure out how to separate the precious metal from its ore. Only then would the Bronze Age truly end and the Iron Age begin.

The Long Road to the First Steel

To know steel, we must first understand iron, for the metals are nearly one and the same. Steel contains an iron concentration of 98 to 99 percent or more. The remainder is carbon—a small additive that makes a major difference in the metal’s properties. In the centuries and millennia before the breakthroughs that built skyscrapers, civilizations tweaked and tinkered with smelting techniques to make iron, creeping ever closer to steel.

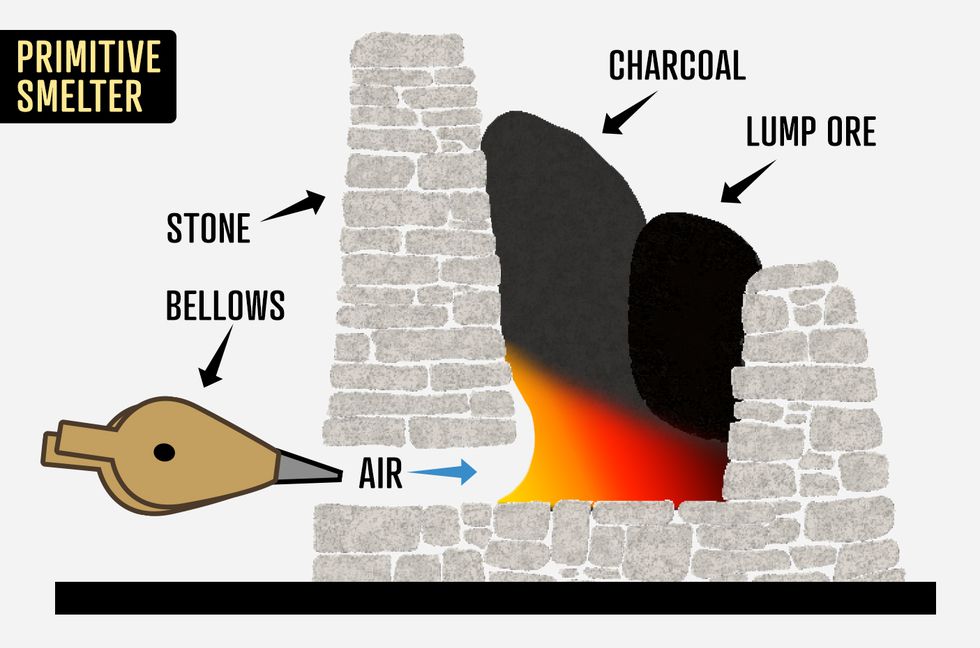

MICHAEL STILLWELL

Around 1,800 BC, a people along the Black Sea called the Chalybes wanted to fabricate a metal stronger than bronze, something that could be used to make unrivaled weapons. They put iron ores into hearths, hammered them, and fired them for softening. After repeating the process several times, the Chalybes pulled sturdy iron weapons from the forge.

What the Chalybes made is called wrought iron, one of two major precursors to modern steel. They soon joined the warlike Hittites, creating one of the most powerful armies in ancient history. No nation’s weaponry matched a Hittite sword or chariot.

Steel’s other younger sibling, so to speak, is cast iron, which was first made in ancient China. Beginning around 500 BC, Chinese metalworkers built seven-foot-tall furnaces to burn larger quantities of iron and wood. The material was smelted into a liquid and poured into carved molds, taking the shape of cooking tools and statues.

Neither wrought nor cast iron was quite the perfect mixture, though. The Chalybes’ wrought iron contained only 0.8 percent carbon, so it did not have the tensile strength of steel. Chinese cast iron, with 2 to 4 percent carbon, was more brittle than steel. The smiths of the Black Sea eventually began to insert iron bars into piles of white-hot charcoal, which created steel-coated wrought iron. But a society in South Asia had a better idea. India would produce the first true steel.

Around 400 BC, Indian metalworkers invented a smelting method that happened to bond the perfect amount of carbon to iron. The key was a clay receptacle for the molten metal: a crucible. The workers put small wrought iron bars and charcoal bits into the crucibles, then sealed the containers and inserted them into a furnace. When they raised the furnace temperature via air blasts from bellows, the wrought iron melted and absorbed the carbon in the charcoal. When the crucibles cooled, ingots of pure steel lay inside.

An example of an early clay crucible discovered in Germany. SSPL/GETTY IMAGES

India’s ironmasters shipped their “wootz steel” across the world. In Damascus, Syrian smiths used the metal to forge famous, almost mythological “Damascus steel” swords, said to be sharp enough to cut feathers in midair (and inspiring fictional supermaterials like the Valyrian steel of Game of Thrones). Indian steel made it all the way to Toledo, Spain, where smiths hammered out swords for the Roman army.

In shipments to Rome itself, Abyssinian traders from the Ethiopian Empire served as deceitful middlemen, deliberately misinforming the Romans that the steel was from Seres, the Latin word for China, so Rome would think that the steel came from a place too distant to conquer. The Romans called their purchase Seric steel and used it for basic tools and construction equipment in addition to weaponry.

Iron’s days as a precious metal were long over. The fiercest warriors in the world would now carry steel.

Holy Swords and Samurai Steel

According to legend, the great sword Excalibur was imposing and beautiful. The word means "cut-steel." But it wasn’t steel. From the age of King Arthur through Medieval times, Europe lagged behind in iron and steel production.

As the Roman Empire fell (officially in 476), Europe spun into chaos. India still made its sensational steel, but it couldn’t reliably ship the metal to Europe, where the roads were unkempt, merchants were ambushed, and people feared plague and illnesses. In Catalonia, Spain, ironworkers developed furnaces similar to those in India; the “Catalan furnace” produced wrought iron, and lots of it—enough metal to make horseshoes, wheels for carriages, door hinges, and even steel-coated armor.

Knights brandished specially crafted swords. They were forged by twisting rods of iron, a process that left unique herringbone and braided patterns in the blades. The Vikings interpreted the designs as dragon coils, and swords like King Arthur’s Excalibur and El Cid’s Tizona became mythological.

The best swords in the world, however, were made on the other side of the planet. Japanese smiths forging blades for the samurai developed a masterful technique to create light, deadly sharp blades. The weapons became heirlooms, passed down through generations, and few gifts in Japan were greater. The forging of a katana was an intricate and ritualized affair.

Japanese smiths washed themselves before making a sword. If they were not pure, then evil spirits could enter the blade. The metal forging began with wrought iron. A chunk of the material was heated with charcoal until it became soft enough to fold. After it cooled, the iron was heated and folded about 20 more times, giving the blade its arcing shape, and all through the forging and folding, the wrought iron’s continued exposure to carbonaceous charcoal turned the metal into steel.

Katana signed by Masamune, considered Japan’s greatest swordsmith from the Kamakura period, 14th century. TOKYO NATIONAL MUSEUM AT UENO

A swordsmith used clay, charcoal, or iron powder for the next step, brushing the material along the blade to shape the final design. Patterns emerged in the steel that were similar to wood grain with swirling knots and ripples. The details were even finer than the dragon scales of European blades, and Japanese katanas were given names like “Drifting Sand,” “Crescent Moon,” and “Slayer of Shuten-doji,” a mythological beast in Japanese lore. Five blades that remain today, the Tenka-Goken, or “Five Swords Under Heaven,” are kept as national treasures and holy relics in Japan.

Of Iron and Coal

The first blast furnace looked like an hourglass.

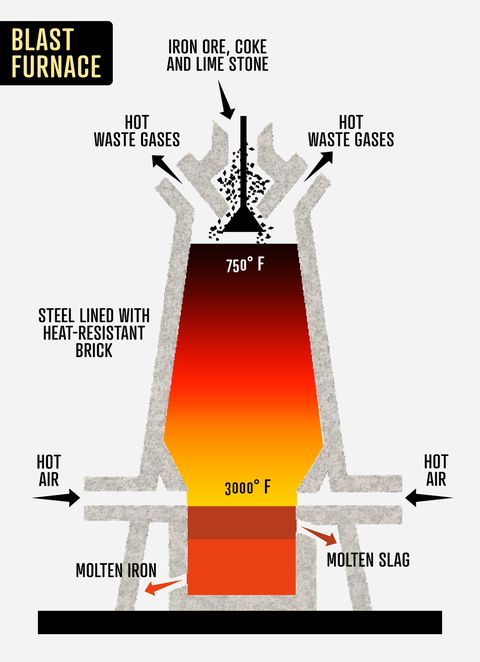

Along the Rhine Valley in present-day Germany, metalworkers developed a contraption that stood about 10 feet high, with two bellows placed at the bottom, to accommodate larger quantities of iron ore and charcoal. The blast furnace got blazing hot, the iron absorbed more carbon than ever, and the mixture turned into cast iron that could be easily poured into a mold.

MICHAEL STILLWELL

It was the ironmaking process the Chinese had practiced for 1,700 years—but with a bigger pot. Workers dug trenches on the foundry floor that branched out from a long central channel, making space for the liquid iron to flow. The trenches resembled a litter of suckling piglets, and thus a nickname was born: pig iron.

Iron innovation came just in time for a Western world at war. The invention of cannons in the 13th century and firearms in the 14th century generated a hunger for metal. Pig iron could be poured right into cannon and gun barrel molds, and Europe started pumping out weapons like never before.

But the iron boom created a problem. As European powers extended their reach across the globe, they used up tremendous amounts of timber, both to build ships and to make charcoal for smelting. A single English furnace required about 240 acres of trees per year, according to the book Steel: From Mine to Mill, the Metal That Made America by Brooke C. Stoddard. The British Empire turned to the untapped resources of the New World for a solution and began shipping metal smelted in the American colonies back across the Atlantic. But smelting iron in the colonies destroyed business for the ironworks in England.

The answer to Britain’s fuel woes came from a cast iron pot maker. Abraham Darby spent much of his childhood working in malt mills, and in the early 1700s, he remembered a technique from his days of grinding barley: roasting coal, a combustible rock. Others had tried smelting iron with coal, but Darby was the first to roast the coal before smelting. Roasted coal maintained its heat far longer than charcoal and allowed smiths to create a thinner pig iron—perfect for pouring into gun molds. Today, Darby’s large blast furnace can be seen at the Coalbrookdale Museum of Iron.

England had discovered the power of smelting with coal. But it still wasn’t making steel.

The Clockmaker and the Crucible

Benjamin Huntsman was frustrated with iron. The alloys available to the clockmaker from Sheffield varied too much for his work, particularly fabricating the delicate springs.

An untrained eye doctor and surgeon in his spare time, Huntsman experimented with iron ore and tested different ways of smelting it. Eventually he came up with a process quite similar to the ancient Indian method of using a clay crucible. However, Huntsman’s technique had two key differences: He used roasted coal rather than charcoal, and instead of placing the fuel inside the crucible, he heated iron and carbon mixtures over a bed of coals.

The ingots that emerged from the smelter were more uniform, stronger, and less brittle: the best steel that Europe, and perhaps the world, had ever seen. By the 1770s, Sheffield had become the national fulcrum of steel manufacturing. Seven decades later, the whole country knew the process, and the steelworks of England burned bright.

In 1851, one of the first world's fairs was held in London, the Great Exhibition of the Works of Industry of All Nations. The Crystal Palace was built with cast iron and glass for the event, and almost everything inside was made of iron and steel. Locomotives and steam engines, water fountains and lampposts, anything and everything that could be cast from molten metal was on display. The world had never seen anything like it.

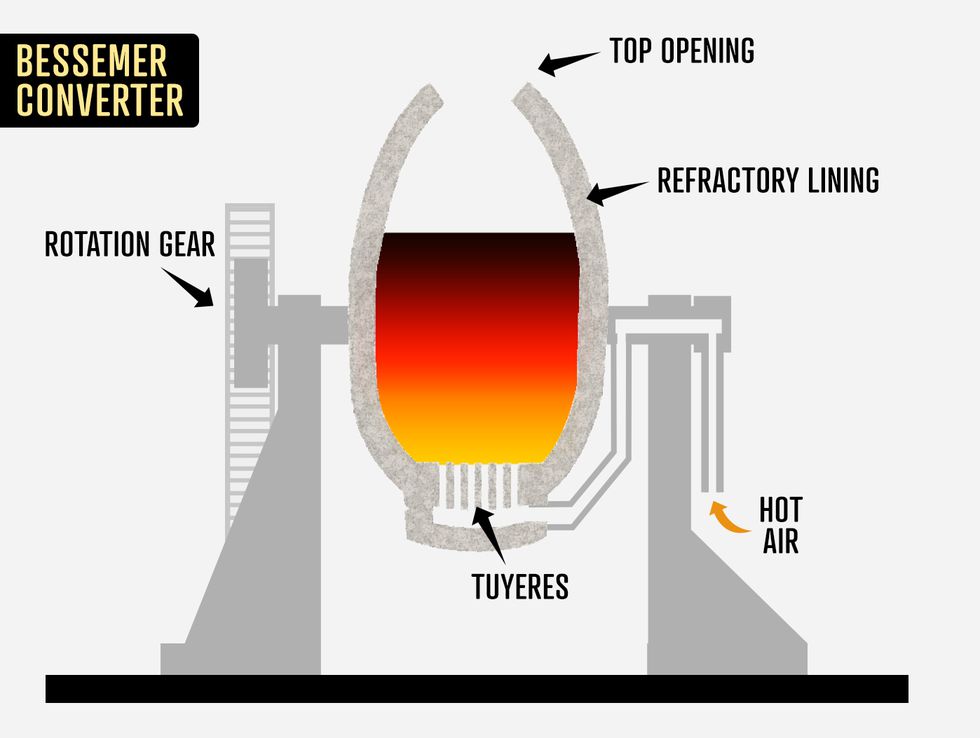

The Bessemer Breakthrough

Henry Bessemer was a British engineer and inventor known for a number of unrelated inventions, including a gold brass-based paint, a keyboard for typesetting machines, and a sugarcane crusher. When the Crimean War broke out in Eastern Europe in the 1850s, he built a new elongated artillery shell. He offered it to the French military, but the traditional cast iron cannons of the time were too brittle to fire the shell. Only steel could handle the controlled explosion.

The crucible steelmaking process was much too expensive to produce items as large as cannons, so Bessemer set out to find a way to produce steel in larger quantities. One day in 1856, he decided to pour pig iron into a container rather than let it ooze into a trench. Once the iron was inside the container, Bessemer blasted air through perforations in the bottom. According to Steel: From Mine to Mill, everything remained calm for about 10 minutes, and then suddenly sparks, flames, and molten pig iron came bursting from the container. When the chaos ended, the material left in the container was carbon-free, pure iron.

Oil painting by E.F. Skinner showing steel being produced by the Bessemer Process at Penistone Steel Works, South Yorkshire. Circa 1916. SSPL/GETTY IMAGES

The impact of this explosive smelting incident is hard to overstate. When Bessemer used the bellows directly on the molten pig iron, the carbon bonded with the oxygen from the air blasts, leaving behind pure iron that—through the addition of carbon-bearing materials such as spiegeleisen, an alloy of iron and manganese—could easily be turned into high-quality steel.

Bessemer built a machine to carry out the procedure: the “Bessemer Converter.” It was shaped like an egg with an interior clay lining and an exterior of solid steel. At the top, a small opening spewed flames 30 feet high when the air blasted into the furnace.

Almost immediately, though, a problem arose in Britain's ironworks. It turned out that Bessemer had used an iron ore containing very little phosphorus, while most iron ore deposits are rich in phosphorus. The old methods of iron smelting reliably removed the phosphorus, but the Bessemer Converter did not, producing brittle steel.

MICHAEL STILLWELL

The issue vexed metallurgists for two decades, until a 25-year-old British police clerk and amateur chemist, Sidney Gilchrist Thomas, found a solution to the phosphorus problem. Thomas discovered that the device’s clay lining was not reactive with phosphorus, so he replaced the clay with a lime-based lining. It worked like a charm. The new method, which churned out five tons of steel in 20 minutes, could now be used across England’s ironworks. The old Huntsman crucible process, which produced a paltry 60 pounds of steel in two weeks, was obsolete. The Bessemer Converter was the new king of steel.

American Steel

On the other side of the Atlantic, massive iron ore deposits remained untapped in the American wilderness. In 1850, the United States was producing only a fifth as much iron as Britain. But after the Civil War, industrialists began turning their attention to the Bessemer process, sparking a steel industry that would generate vastly more wealth than the 1849 California Gold Rush. There were roads to build between cities, bridges to construct over rivers, and railroad tracks to lay into the heart of the Wild West.

Andrew Carnegie wanted to build it all.

No one accomplished the American dream quite like Carnegie. The Scottish immigrant arrived in the country at age 12, settling in a poor neighborhood of Pittsburgh. Carnegie began his ascent as a teenage messenger boy in a telegraph office. One day, a high-ranking official at the Pennsylvania Railroad Company, impressed by the hardworking teen, hired Carnegie to be his personal secretary.

The “Star-Spangled Scotsman” developed a business acumen and worked his way up the ladder in the railroad industry, making some savvy investments along the way. He owned stakes in a bridge-building company, a rail factory, a locomotive works, and an iron mill. When the Confederacy surrendered in 1865, the 30-year-old Carnegie turned his attention to building bridges. Thanks to his mill, he had the mass production of cast iron at his disposal.

But Carnegie knew he could do better than cast iron. A durable bridge needed steel. About a decade before Sidney Thomas refined the Bessemer Converter with a lime-based lining, Carnegie brought the Bessemer process to America and acquired phosphorus-free iron to produce steel. He established a steel mill in Homestead, Pennsylvania, to manufacture the alloy for a new type of building that architects called “skyscrapers.” In 1889, all of Carnegie’s holdings were consolidated under one name: the Carnegie Steel Company.

By this point, Carnegie was single-handedly producing about half as much steel as all of Britain. Additional steel companies started sprouting up around the country, creating new towns and cities, including an iron mining town in Connecticut named "Chalybes" after the ironmakers of antiquity.

America was suddenly steamrolling its way to the top of the steel industry. But things were about to get rocky at Carnegie’s Homestead Steel Works, right across the Monongahela River from Pittsburgh.

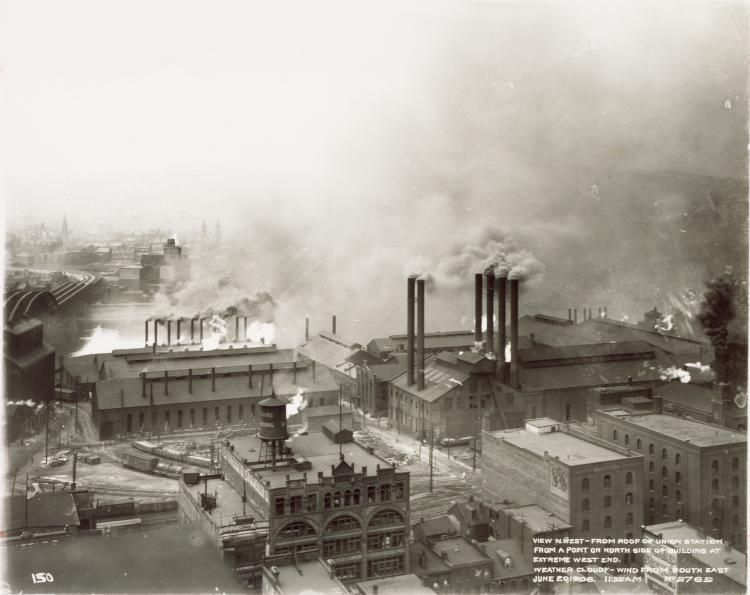

To keep manufacturing costs down, wages were low. The salary for the 84-hour workweek was less than $10 in 1890 (about $250 today)—and that for backbreaking labor in the hot steel mills. Accidents were common, and in Pittsburgh, the air was so heavily polluted that a writer for The Atlantic Monthly called Steel City “hell with the lid taken off.”

Pittsburgh’s Strip District neighborhood looking northwest from the roof of Union Station. NASA

In July 1892, tensions boiled over between the Carnegie Steel Company and the union representing workers at the Homestead mill. The company chair, Henry Clay Frick, took a hard stance, threatening to cut wages. The workers hanged an effigy of Frick, and he responded by surrounding the mill with three miles of barbed-wire fence, expecting hostilities. The workers voted to strike and were subsequently fired, leading to a nickname for the fenced mill: “Fort Frick.”

About 3,000 strikers took control of Homestead, forcing out local law enforcement. Frick hired 300 agents from the Pinkerton Detective Agency to guard the mill, and on the morning of July 6, 1892, a civil battle ensued. Men gathered at the riverbank, throwing rocks and firing guns at the Pinkerton agents trying to get ashore in boats. The strikers used whatever they could find as weapons, rolling out an old cannon, igniting dynamite, and even pushing a burning train car into the boats.

Order was restored when a National Guard battalion of 8,500 entered the town and placed Homestead under martial law. Ten people were killed in the clash. Frick was later shot and stabbed in his office by an anarchist who heard of the strike, but survived. He left the company shortly after, and in 1897, Carnegie hired an engineer named Charles M. Schwab (not to be confused with the founder of the Charles Schwab Corporation) to serve as the new president. In 1901, Schwab convinced Carnegie to sell his steel company for $480 million. Schwab’s new company merged with additional mills to form the United States Steel Corporation.

The American steel industry continued to explode into the 20th century. In 1873, the United States produced 220,000 tons of steel. By 1900, America accounted for 11.4 million tons of steel, more than the British and successful German industries combined. The new United States Steel Corporation was the largest company in the world, manufacturing two-thirds of the nation’s steel.

It was a rate of production never before seen across the globe, but the steel foundries were just getting warmed up.

Metal of War and Peace

Disagreements at U.S. Steel led Charles Schwab to find a new job presiding over a different, rapidly growing company: Bethlehem Steel. In 1914, two months into the Great War, Schwab received a secret message from the British War Office. Hours later, he bought a ticket to cross the Atlantic under a false name. In Europe, he met with England’s Secretary of State for War, who wished to place a large order—with a catch. The British wanted Bethlehem to build $40 million in weaponry for England, and do no business with the Crown’s enemies. Schwab accepted and went to his next meeting, this one with the First Lord of the Admiralty, Winston Churchill. Churchill placed an order of his own: submarines for the Royal Navy to combat German U-boats, and he needed them immediately.

HMS E34, a British E-class submarine in a floating dock. She was commissioned in March 1917, sank the U-Boat UB-16 off Harwich in the North Sea on 10 May 1918, and was mined near the Frisian islands on 20 July 1918. The sub was lost with all the crew. UNITED KINGDOM GOVERNMENT

But Schwab had a problem. Neutrality laws in the U.S. prevented companies from selling weapons to WWI combatants on either side of the trenches. Undeterred, Bethlehem Steel sent submarine parts to an assembling plant in Montreal, ostensibly for humanitarian rebuilding efforts, and American steel started leaking into the Allied war effort.

The need to skirt neutrality laws disappeared when the United States officially entered World War I in April 1917. In 1914, when the war was just getting started, the United States produced 23.5 million tons of steel—more than twice its production 14 years earlier. At war’s end in 1918, production had doubled again. American steel gave the Allies a decisive advantage in the fight against the Central Powers.

When the war ended, U.S. steelmaking emerged stronger than ever. Art Deco towers began to sprout up among the New York and Chicago skylines, with the vast majority of the steel coming from two companies: U.S. Steel and Bethlehem Steel. Iconic structures such as Rockefeller Center, the Waldorf-Astoria Hotel, the George Washington Bridge, and the Golden Gate Bridge were built with Bethlehem steel. In 1930, the company’s steel went into the then-tallest skyscraper in the world: the Chrysler Building. Less than a year later, the Empire State Building, with 60,000 tons of steel supplied by U.S. Steel, would reach higher than Chrysler to become the enduring symbol of Manhattan.

But skyscrapers weren’t the only innovation sparked by the explosion in steel production. The material went into a bonanza of cars, home appliances, and food cans. (Two up-and-coming companies, Dole and Campbell’s, were becoming all the rage thanks to the long shelf life of their canned goods.) Bethlehem Steel and U.S. Steel’s assets were valued higher than those of the Ford and General Motors Companies.

It was truly the age of steel—but trouble was not far off.

Following the stock market crash of 1929, steel production slowed as the economy tumbled into the Great Depression. American steelworkers were laid off, but the mills never went completely dark. Railroad tracks still spread across the country, canned food remained popular, and as Prohibition drew to a close, a new steel product emerged: the steel beer can, introduced in the 1930s by Pabst for its Blue Ribbon brew.

Following the Depression, the metal-hungry engines of war again ignited the foundries of the world. Germany moved to occupy land in Denmark, Norway, and France, gaining control of new iron mines and mills. Suddenly, the Nazis were capable of producing as much steel as the United States. In the East, Japan took control of iron and coal mines in Manchuria.

When the attack on Pearl Harbor brought America into World War II, the U.S. government banned production of most steel consumer goods. The industrialized nations of the world, hurtling headfirst into world war, began rationing steel for a select few purposes: ships, tanks, guns, and planes.

The American mills melted metal 24 hours a day, often with primarily female workforces. The economy began to boom again, and soon American steel production was more than three times larger than that of any other country. During World War II, the U.S. manufactured 25 times more steel than it did during World War I. And once again, the steelworks of the New World played a decisive role in the Allies’ victory.

When the war was over at last, the U.S. lifted its ban on steel consumer goods. With more than half the world’s steel now American-made, the markets for cars, home appliances, toys, and reinforcing bars (rebar) for construction were as lucrative as ever. Steel from leftover ships and tanks was melted down in enormous furnaces to be reused in bridges and beer cans.

But overseas, a dire need to rebuild, and the introduction of new steelmaking technology, was about to help foreign steel companies flourish.

The Road to Modern Steel

Even with mills churning non-stop during wartime, manufacturers had not yet perfected the art of smelting steel. It would take an idea dreamed up 100 years before the end of WWII to revolutionize the process once more—and ultimately, to dethrone the U.S. as the world’s steel king.

German scientist and glassmaker William Siemens, living in England to take advantage of what he believed to be favorable patent laws, realized in 1847 that he could lengthen the amount of time a furnace held its peak temperature by recycling the emitted heat. Siemens built a new glass furnace with a small network of firebrick tubes. Hot gases from the melting chamber exited through the tubes, mixed with external air, and were recycled back inside the chamber.

It took nearly 20 years for Siemens’ glassmaking furnace to find its way into metallurgy. In the 1860s, a French engineer named Pierre-Emile Martin learned of the design and built a Siemens furnace to smelt iron. The recycled heat kept the metal liquefied for longer than the Bessemer process, giving workers more time to add the precise amounts of carbon-bearing iron alloys that turned the material to steel. And because of the additional heat, even scrap steel could be melted down. By the turn of the century, the Siemens-Martin process, also known as the open hearth process, had caught on all over the world.

MICHAEL STILLWELL

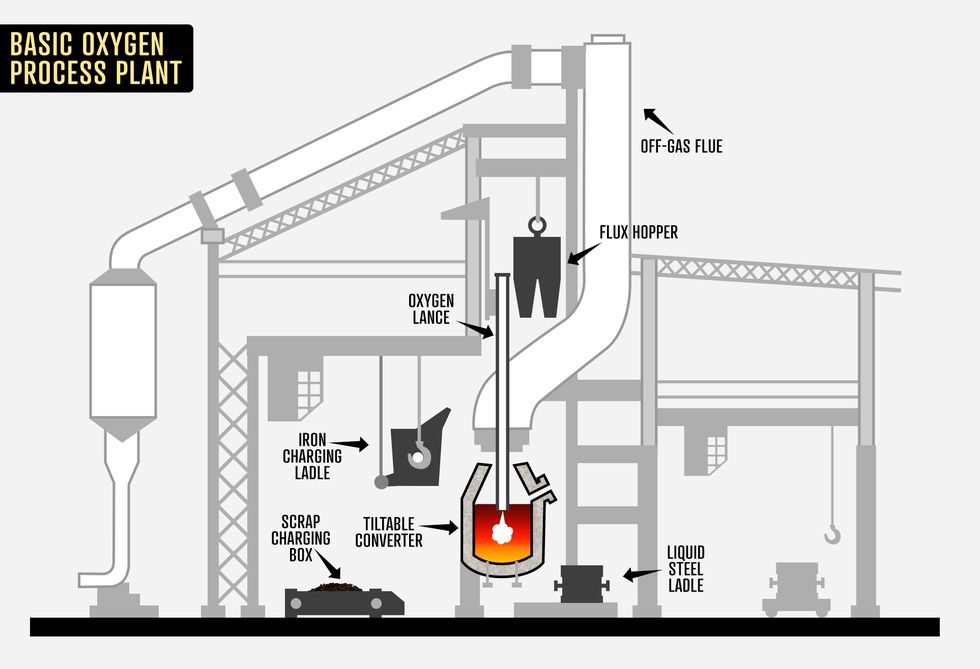

Jump forward to the 20th century, when a Swiss engineer named Robert Durrer found an even better way. Durrer was teaching metallurgy in Nazi Germany. After World War II wound down, he moved back to Switzerland and experimented with the Bessemer process. He blasted pure oxygen into the furnace (rather than air, which is only about 21 percent oxygen), and found that it removed carbon from the molten iron more effectively.

Durrer also discovered that by blowing oxygen into the furnace from above, rather than below as on a Bessemer Converter, he could melt cold scrap steel into pig iron and recycle it back into the steelmaking process. This “basic oxygen process” separated all traces of phosphorus from the iron, too. The method combined the advantages of both the Bessemer and Siemens-Martin furnaces. Thanks to Durrer's innovations, producing vast quantities of steel became cheaper yet again.

While nations in Europe and Asia immediately adopted the basic oxygen process, American mills, still at the top of the industry, soldiered on using the Siemens-Martin process in confident contentment—unwittingly opening the door for foreign competition.

Stainless Steel and the Decline of the American Mill

In 1912, a British metallurgist named Harry Brearley was looking for a way to preserve the life of gun barrels. Experimenting with chromium and steel alloys, he found that steel with a layer of chromium was particularly resistant to acid and weathering.

Brearley started selling the steel-chromium alloy to a friend working in cutlery, calling it “rustless steel”—a literal moniker befitting an engineer. His friend, Ernest Stuart, who needed to sell the knives to the public, came up with a catchier name: stainless steel.

A company called Victoria was forging steel knives for the Swiss Army when it caught wind of the new anticorrosive metal from Great Britain. The company promptly changed the metal in its knives to inox, which is another word for the alloy that’s derived from the French word for stainless, “inoxydable.” Victoria rebranded itself as Victorinox. Today, there is a good chance you could find one of their red pocketknives in your desk drawer.

Suddenly stainless steel was all over the world. The anticorrosive, glimmering metal became a critical material for surgical tools and home goods. The hubcaps at the top of the Chrysler Building are made of stainless steel, which helps them retain their silver sheen in the sunlight. In 1959, workers broke ground in St. Louis to build the stainless steel Gateway Arch, which remains the tallest man-made monument in the Western Hemisphere.

The Gateway Arch in St. Louis standing 630 feet tall. DANIEL SCHWEN/WIKIMEDIA

But just as St. Louis was building the Gateway to the West, the rest of the world was catching up with American steel production. Low wages overseas and the use of the basic oxygen process made foreign steel cheaper than American steel by the 1950s, just as the steel industry took a hit from a cheaper alloy for home goods: aluminum.

In 1970, U.S. Steel’s run as the world’s largest steel company ended after seven decades, supplanted by Japan’s Nippon Steel. China became the world’s top steelmaker in the 1990s, and Bethlehem Steel closed its plant in Bethlehem in 1995. It wasn’t until the late 20th century that most American steel mills finally adopted the basic oxygen process. As of 2016, the United States ranked fourth in steel production according to the World Steel Association.

The Sustainable Future of Steel

Much of the world’s stainless steel is made in mini mills. These metalworks do not make steel from scratch, but rather melt down scrap steel for reuse. The most common furnace in a mini mill—the electric arc furnace, also invented by William Siemens—uses carbon electrodes to create an electric charge to melt down metal.

The spread of mini mills in the last half-century was a critical step toward recycling old steel, but there is a long way to go to achieve fully sustainable smelting. Forging steel is a well-known emitter of greenhouse gases. The basic oxygen process, still used widely today, was developed almost a century ago, when the ramifications of climate change were only just entering circles of scientific research. The basic oxygen process still burns coal, emitting about four times more carbon dioxide than electric furnaces. But phasing out the oxygen blasts entirely for the electric arc is not a sustainable solution—only so much scrap steel is available for recycling.

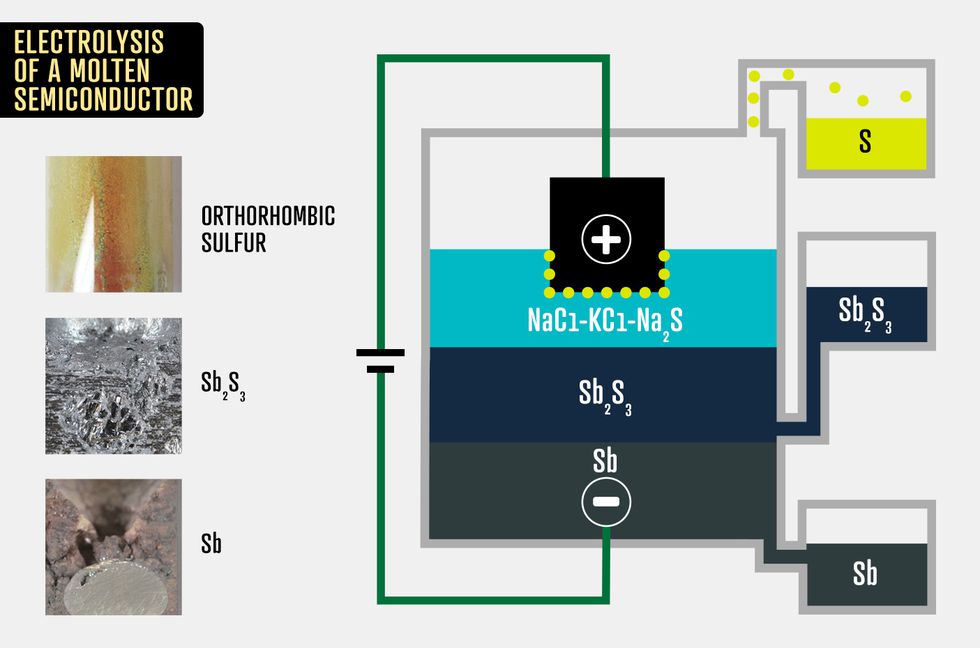

Today, metallurgists are in the early stages of developing eco-friendly steel production methods. At MIT, researchers are testing new electricity-based technologies for smelting metals. These electric smelting techniques have the potential to significantly reduce greenhouse gas emissions if they can be improved to work on metals with higher melting points, such as iron and steel.

A chart of electrolysis of a molten semiconductor. MIT/MICHAEL STILLWELL

Additional ideas that have been used to limit car emissions are being tested as well. Last February, an Austrian manufacturer called Voestalpine began constructing a mill designed to replace coal with hydrogen fuel—technology that is likely at least two decades away. As a stopgap, the Chinese government even enforced limits on its country’s steel output last year.

The stakes have changed in the 21st century. But the question remains the same as it ever was, the same as it was for those manning the crucibles of India, the blast furnaces of Germany, and the foundries of America. How do we get better at making steel?

Source:

www.popularmechanics.com